UAT is not the finish line. It never was. For D365 Finance & Operations teams, the real test starts the moment real users, real data, and real business processes meet an updated system for the first time.

Last week we wrote about how D365 Finance & Operations receives 18+ updates per year — not 2. The response was consistent: most teams hadn’t mapped the full update cadence and were testing for far less than what was actually hitting their environment.

This week we want to take that one step further — because even the teams that do test thoroughly run into the same problem.

UAT passes. Go-live is smooth. And then, two weeks later, something breaks in production that nobody saw coming.

This is not bad luck. It is a structural limitation of how UAT works — and understanding it is the difference between a QA strategy that protects your business and one that gives you false confidence.

The uncomfortable truth: UAT was designed to validate functionality in a controlled environment. Production is not controlled. And the gap between those two environments is exactly where D365 failures hide.

Why UAT passes and production breaks — the structural gap

UAT and production environments differ on five dimensions that matter enormously for D365: data, user behaviour, transaction volume, integration frequency, and batch contention.

UAT environment: Small, clean, controlled dataset. Simulated user behaviour. Low transaction volumes. Integrations running at reduced frequency. Batch jobs rarely competing with interactive users.

Production environment: Years of real data at full volume. Organic, unpredictable user behaviour. Integrations running at full frequency. Batch jobs competing with live transactions. Month-end close adding peak load.

The issues that surface in production after a D365 update are almost never functional failures — those get caught in UAT. They are performance, volume, and interaction failures that only emerge under real conditions. A report that runs in 3 seconds on UAT data times out against 4 million production records. A batch job that completed cleanly in testing starts competing with live financial postings and causes deadlocks. An integration that worked at low frequency in UAT starts dropping records at production volume.

UAT proves the system works. It does not prove the system works at scale, under load, with real data, and alongside every other process running simultaneously. That proof only comes from production — and by then, finding and fixing the issue is significantly more expensive.

The 5 things that break most often after a D365 update

Based on what the D365 community consistently reports after major releases — and what we see across client environments — these are the failure patterns that appear most reliably:

01. Batch jobs slowing or failing

Batch jobs that ran in minutes during testing begin taking hours in production. Platform updates regularly change batch execution behaviour, scheduling priority, and concurrency handling. Under real production load, with financial posting, MRP, and integration jobs all competing, previously reliable batches start timing out or deadlocking.
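One way to catch this kind of slowdown before users do is to compare post-update batch durations against a pre-update baseline. The following is a minimal Python sketch, assuming job names and run durations can be exported from batch history. The job names, sample durations, and the 1.5x threshold are hypothetical placeholders, not D365 values.

```python
from statistics import median

def slowed_jobs(baseline, current, factor=1.5):
    """Return jobs whose median duration grew by more than `factor`.

    baseline / current: dict mapping job name -> list of run durations (seconds).
    """
    flagged = {}
    for job, runs in current.items():
        before = baseline.get(job)
        if not before or not runs:
            continue  # no history to compare against
        base = median(before)
        if base == 0:
            continue  # avoid dividing by zero on empty or instant runs
        ratio = median(runs) / base
        if ratio > factor:
            flagged[job] = round(ratio, 2)
    return flagged

# Illustrative durations only (seconds); these are not real measurements.
baseline = {"LedgerPosting": [300, 320, 310], "MRP": [1200, 1250, 1180]}
current = {"LedgerPosting": [310, 305, 330], "MRP": [4100, 3900, 4300]}

print(slowed_jobs(baseline, current))  # {'MRP': 3.42}
```

Using the median rather than the mean keeps a single unusually long run from triggering a false alarm. The right threshold depends on how much variation your jobs show in a normal week.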

02. Integration failures at volume

Integrations with external systems (payment gateways, warehouse management, third-party reporting tools) work cleanly at low frequency in UAT. At full production volume, API changes introduced in service updates or wave releases cause data to drop, duplicate, or arrive out of sequence. These rarely trigger clean error messages; they surface as data discrepancies days later.
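Because these failures show up as discrepancies rather than errors, a scheduled reconciliation between what was sent and what arrived can surface them within hours instead of days. Here is a minimal Python sketch, assuming both sides can export an ordered list of record IDs and that each ID is sent once. The ID format and sample data are hypothetical.

```python
from collections import Counter

def reconcile(sent_ids, received_ids):
    """Compare IDs sent by the source with IDs received by the target.

    Assumes each ID appears once in sent_ids (an assumption of this sketch).
    """
    received_counts = Counter(received_ids)
    sent_set = set(sent_ids)

    dropped = [i for i in sent_ids if received_counts[i] == 0]
    duplicated = sorted(i for i, n in received_counts.items() if n > 1)

    # Order of first arrival, ignoring duplicates and unknown IDs.
    first_seen = list(dict.fromkeys(i for i in received_ids if i in sent_set))
    # Send order, restricted to the records that actually arrived.
    expected = [i for i in sent_ids if received_counts[i] > 0]

    return {
        "dropped": dropped,
        "duplicated": duplicated,
        "out_of_sequence": first_seen != expected,
    }

# Illustrative IDs only.
sent = ["INV-001", "INV-002", "INV-003", "INV-004"]
received = ["INV-001", "INV-003", "INV-003", "INV-002"]

print(reconcile(sent, received))
# {'dropped': ['INV-004'], 'duplicated': ['INV-003'], 'out_of_sequence': True}
```

Running a check like this daily for the first weeks after an update narrows any data gap to a single day.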

03. Custom code behaving differently

Microsoft’s updates don’t overwrite custom code, but they change the base code your customisations interact with. A modified API, an updated form event, a changed posting routine: any of these can cause custom extensions to behave differently without throwing an error. The logic runs, the output is wrong, and nobody notices until a business process produces an unexpected result.
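Silent changes in output are what a snapshot comparison (sometimes called a golden-master test) is meant to catch: record the outputs of a customisation before the update, then compare the same cases afterwards. Here is a minimal Python sketch under that assumption. The case names, values, and tolerance are hypothetical, and how you capture the outputs from your environment is left open.

```python
def diff_against_snapshot(snapshot, actual, tolerance=0.005):
    """Return cases whose post-update output differs from the recorded one.

    snapshot: dict of case name -> value recorded before the update.
    actual:   dict of case name -> value produced after the update.
    """
    mismatches = {}
    for case, expected in snapshot.items():
        got = actual.get(case)
        if got is None or abs(got - expected) > tolerance:
            mismatches[case] = (expected, got)
    return mismatches

# Illustrative values only: a hypothetical custom discount calculation.
snapshot = {"order_100_discount": 12.50, "order_101_discount": 0.00}
after_update = {"order_100_discount": 12.50, "order_101_discount": 3.75}

print(diff_against_snapshot(snapshot, after_update))
# {'order_101_discount': (0.0, 3.75)}
```

The check needs no knowledge of what changed in the base code. It only says that a result moved, which is the signal that goes missing when nothing throws an error.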

04. Financial postings producing incorrect outputs

Wave One’s journal framework changes, service update modifications to ledger processing, and quality patches that affect posting logic can all cause financial entries to behave differently from previous periods. This typically surfaces during month-end close, when the pressure to resolve it is highest and the time available is lowest.
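One invariant that can be checked long before month-end is that every voucher still balances after the update. Here is a minimal Python sketch, assuming posted lines can be exported as voucher and signed-amount pairs, with debits positive and credits negative. The voucher numbers and amounts are hypothetical.

```python
from collections import defaultdict
from decimal import Decimal

def unbalanced_vouchers(lines):
    """Return vouchers whose signed amounts do not sum to zero.

    lines: iterable of (voucher, amount) pairs, amounts given as strings so
    Decimal keeps them exact (debits positive, credits negative).
    """
    totals = defaultdict(Decimal)  # Decimal() starts each total at 0
    for voucher, amount in lines:
        totals[voucher] += Decimal(amount)
    return {v: t for v, t in totals.items() if t != 0}

# Illustrative postings only.
lines = [
    ("V-1", "100.00"), ("V-1", "-100.00"),
    ("V-2", "250.00"), ("V-2", "-249.99"),
]

print(unbalanced_vouchers(lines))  # {'V-2': Decimal('0.01')}
```

A balance check will not catch an entry posted to the wrong account, so it complements a comparison against prior-period results rather than replacing one.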

05. UI changes breaking automated scripts

Fluent UI updates in wave releases change page layouts, form structures, and navigation paths. Any automated test scripts or workflow automations built against specific UI elements (field positions, button labels, selectors) break silently. The automation runs, reports success, and the actual process it was testing has not been validated.
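A common guard against this kind of false pass is to have each automation step verify its business outcome independently of the UI, so a script that clicked nothing cannot report success. Here is a minimal Python sketch of the idea. The functions and the in-memory `orders` set are hypothetical stand-ins for a real UI script and a real data lookup.

```python
def run_step(action, outcome_verified):
    """Run an automation step, then confirm its business outcome.

    action: callable that drives the UI (a stand-in in this sketch).
    outcome_verified: callable returning True only if the expected
    record actually exists afterwards.
    """
    action()
    if not outcome_verified():
        raise AssertionError(
            "step ran without error, but the expected outcome is missing"
        )
    return True

# Hypothetical stand-ins for the system's data and two UI scripts.
orders = set()

def create_order_stale_selector():
    # Simulates a selector that no longer matches: nothing happens, no error.
    pass

def create_order_working():
    orders.add("SO-1001")

def order_exists():
    return "SO-1001" in orders

try:
    run_step(create_order_stale_selector, order_exists)
except AssertionError as exc:
    print("caught:", exc)

print(run_step(create_order_working, order_exists))  # True
```

The stale-selector step fails loudly because the pass condition is the presence of the order, not the absence of an exception.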

The 3 scenarios that catch teams off guard every release cycle

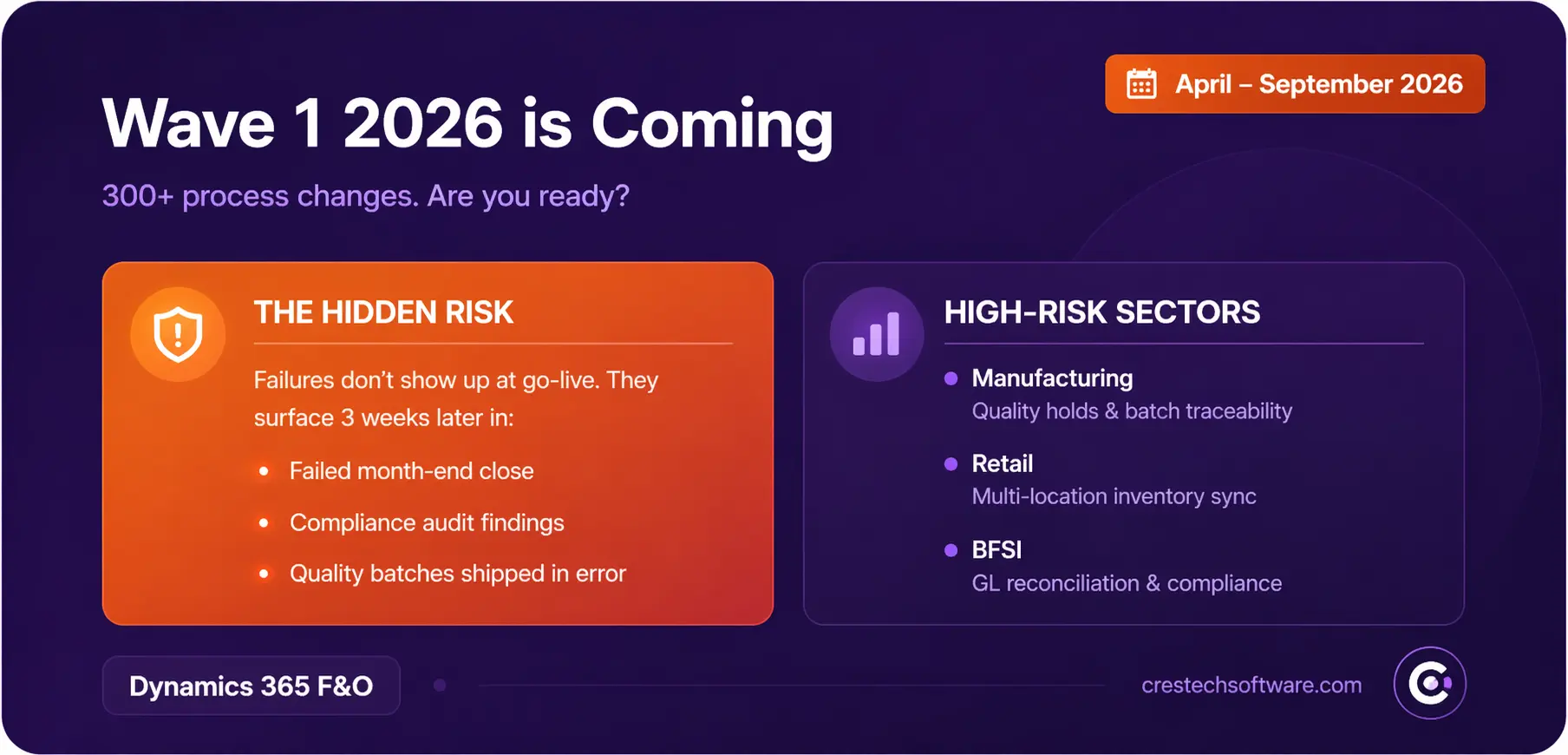

Scenario 1: The month-end close that doesn’t balance

Wave One’s journal framework update changes how GL entries behave across legal entities. UAT runs in a small, clean environment, and everything posts correctly. Production hits with full transaction volumes, intercompany entries, and period-end automations all running simultaneously. The numbers don’t reconcile. The finance team spends two days finding a root cause that a structured post-update validation would have identified in two hours.

Scenario 2: The warehouse dispatch that goes quiet

A service update modifies warehouse management batch processing behaviour. UAT validates the picking and packing workflow using a small dataset at low volume. In production, the batch job that drives dispatch automations starts competing with financial posting jobs during peak hours. Dispatch slows. Orders queue. Nobody raises an IT ticket because the system hasn’t crashed; it’s just slower than it was last week.

Scenario 3: The integration that drops records silently

A quality update changes how D365 authenticates with a third-party integration. UAT uses the same integration at low frequency, and it works. Production runs it at full volume, and some records start failing authentication silently, falling into an error queue that nobody monitors. Three weeks later, a reconciliation report surfaces a data gap that traces back to the update date. The fix is simple. Finding it took three weeks.

Why this keeps happening — and what changes it

The root cause is not poor UAT execution. Most D365 teams run thorough UAT. The root cause is that UAT is designed to answer one question: does the system work? It is not designed to answer the question that actually matters: does the system work at production scale, under production load, with production data, alongside every other process running in parallel?

Answering that second question requires a different approach — continuous regression testing that runs in an environment that mirrors production as closely as possible, against every update, not just major releases.

The teams that consistently come through D365 updates without production failures have three things in common:

They have a regression suite built around their most critical business processes — not a checklist, a structured automated suite that runs on every update. They validate in a production-mirror environment — not a clean UAT environment with minimal data. And they treat every one of the 18+ annual updates as a trigger for structured validation — not just the two wave releases everyone plans for.

At Crestech, this is exactly how we build D365 QA programmes — starting with the processes most likely to break, building regression coverage that mirrors real production conditions, and running it against every update in the calendar. Not just the ones that feel important.

The question worth asking before your next update

Before the next D365 update hits your environment — whether it’s a wave release, a service update, or one of the 12 monthly quality patches that arrive automatically — ask this:

If something breaks in production two weeks from now, how long will it take your team to find it? How long to fix it? And what will it cost in business terms between those two moments?

UAT passing is not the answer to that question. A structured, continuous regression strategy is.

If your current D365 QA approach relies primarily on UAT and manual validation — we’d be happy to walk through what a continuous regression programme looks like in practice and where it would change your exposure across all 18+ annual updates.

Tags: #Dynamics365 #D365FnO #MicrosoftDynamics #UAT #QATesting #ERPTesting #RegressionTesting #WaveOne2026 #ReleaseManagement #D365Community #CrestechSoftware #FinanceAndOperations